The Anatomy of Layer 1: Legacy Inertia and the Deprecation of the Crossover Cable

I have spliced and terminated more twisted-pair copper in the last seven days than in the preceding five years combined. This wasn’t an exercise in nostalgia; it was a strict requirement for an in-house R&D deployment focusing on latency reduction and physical security. In high-throughput, air-gapped environments, excess cable slack isn’t just an aesthetic failure—it introduces marginal signal degradation and presents a physical vulnerability vector. We required exact lengths, terminated on-site.

Stripping back the jacket and untwisting the pairs serves as a visceral reminder of the foundational infrastructure that supports our abstracted, software-defined networks. The physical arrangement of these eight copper wires is not an accident of history. It is the result of decades of industrial standardization, market dominance, and the eventual triumph of silicon over physical routing logic.

To understand modern Layer 1 infrastructure, we must first anchor it within the broader Open Systems Interconnection (OSI) model. While modern abstraction often reduces the network to a single “cloud” variable, infrastructure engineers operate across distinct planes of physical and logical constraints:

+-------+------------------+--------------------------------------+
| Layer | OSI Nomenclature | Addressing & Delivery Mechanism      |
+-------+------------------+--------------------------------------+
| L3    | Network          | IP Address (Global Logical Routing)  |
| L2    | Data Link        | MAC Address (Local Frame Switching)  |
| L1    | Physical         | Volts/Photons (Raw Bit Transmission) |
+-------+------------------+--------------------------------------+

Layer 1 (L1) is the domain of raw physics: voltage differentials on copper or photon pulses through glass. Layer 2 (L2) introduces the first logical wrapper, assembling those pulses into discrete frames governed by hardware MAC addresses. Layer 3 (L3) escapes the local broadcast domain entirely, routing IP packets across disparate, global networks. The legacy of the crossover cable is essentially the story of a physical (L1) hardware hack designed to solve a logical (L2) switching problem.

With this taxonomy established, we can audit the architectural decisions that brought us from shared-bus coaxial cables to the Auto MDI-X standards we rely on today.

The Coaxial Era: Shared Mediums and Physical Collisions

Before the ubiquity of the 8P8C modular connector (colloquially and incorrectly referred to as RJ45), Ethernet was defined by thick and thin coaxial cables: 10BASE5 (Thicknet) and 10BASE2 (Thinnet).

These early IEEE 802.3 specifications operated on a bus topology. Every node on the network shared the exact same physical medium. This was a half-duplex environment governed by CSMA/CD (Carrier-Sense Multiple Access with Collision Detection). If two nodes transmitted simultaneously, a voltage spike (collision) occurred on the copper, forcing a randomized back-off algorithm before retransmission.
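The randomized back-off can be sketched in a few lines. This is a minimal illustration of truncated binary exponential backoff as used by classic half-duplex Ethernet, not a model of any particular NIC; the function names are hypothetical, and the constants are the 10 Mb/s values (51.2 µs slot time, exponent capped at 10, frame abandoned at the 16th attempt).

```python
import random

SLOT_TIME_US = 51.2  # 512 bit times at 10 Mb/s
BACKOFF_CAP = 10     # the random range stops doubling after 10 collisions
ATTEMPT_LIMIT = 16   # the frame is abandoned at the 16th failed attempt


def backoff_slots(collision_count, rng=random):
    """Pick how many slot times to wait after the nth consecutive collision."""
    if collision_count < 1:
        raise ValueError("collision_count starts at 1")
    if collision_count >= ATTEMPT_LIMIT:
        raise RuntimeError("excessive collisions: frame dropped")
    exponent = min(collision_count, BACKOFF_CAP)
    return rng.randrange(2 ** exponent)  # uniform over 0 .. 2^exponent - 1


def backoff_delay_us(collision_count, rng=random):
    """Convert the chosen slot count into microseconds."""
    return backoff_slots(collision_count, rng) * SLOT_TIME_US


# Show how the worst-case wait grows with each successive collision.
for n in (1, 2, 3, 10, 15):
    worst_case = (2 ** min(n, BACKOFF_CAP) - 1) * SLOT_TIME_US
    print(f"collision {n}: wait up to {worst_case:.1f} us")
```

Doubling the range after every collision lets a lightly loaded segment recover almost immediately, while a congested one spreads its retransmissions over a progressively wider window.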

The physical infrastructure of this era was highly fragile. A single missing 50-ohm terminator at the end of a Thinnet run, or a poorly seated “vampire tap” on a Thicknet backbone, would cause signal reflections that took down the entire collision domain. The operational overhead of troubleshooting a single point of failure across a distributed physical bus was a significant driver of infrastructure downtime.

The architectural imperative was clear: networking needed to move from a shared physical bus to a star topology, isolating collision domains and improving fault tolerance.

The Physics of the Twist: Crosstalk and Infrastructure TCO

The introduction of 10BASE-T (IEEE 802.3i) in 1990 shifted the medium from coaxial to unshielded twisted pair (UTP).

Sending high-frequency electrical signals down parallel copper wires creates an electromagnetic field that induces a signal in the adjacent wires. This is known as Crosstalk—specifically NEXT (Near-End Crosstalk) at the point of termination, and later, AXT (Alien Crosstalk) between neighboring cables in high-density bundles.

The engineering solution to mitigate this is differential signaling combined with twisting the pairs. By sending the identical signal in inverted phases across the twisted pair, the resulting electromagnetic fields cancel each other out, while external noise applied equally to both wires is ignored by the receiver.
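A toy numerical sketch makes the cancellation concrete. It assumes idealized conditions (noise couples identically onto both wires, and bits are sent as simple positive or negative levels rather than the Manchester encoding real 10BASE-T uses), and every name in it is illustrative.

```python
def transmit_differential(bits, amplitude=1.0):
    """Encode each bit as a (positive wire, negative wire) voltage pair."""
    pairs = []
    for bit in bits:
        level = amplitude if bit else -amplitude
        pairs.append((level, -level))
    return pairs


def add_common_mode_noise(pairs, noise):
    """Couple the same noise voltage onto both wires of the pair."""
    return [(p + n, m + n) for (p, m), n in zip(pairs, noise)]


def receive_differential(pairs):
    """Recover bits from the difference between the two wires."""
    return [1 if (p - m) > 0 else 0 for p, m in pairs]


def receive_single_ended(pairs):
    """Recover bits from one wire only, measured against ground."""
    return [1 if p > 0 else 0 for p, _ in pairs]


bits = [1, 0, 1, 1, 0]
noise = [0.8, -1.5, 2.0, -0.3, 1.1]  # arbitrary interference, in volts
noisy = add_common_mode_noise(transmit_differential(bits), noise)

print("differential :", receive_differential(noisy))
print("single-ended :", receive_single_ended(noisy))
```

Because the receiver only looks at the difference between the wires, the shared noise term subtracts out, whereas the single-ended reading misreads the final bit once the noise exceeds the signal amplitude.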

However, as we moved from Cat5e (100 MHz) to Cat6 (250 MHz) and Cat6A (500 MHz) to support 10 Gigabit throughput, the physical constraints of the copper became apparent. Simply twisting the wires tighter wasn’t enough. Manufacturers had to introduce physical separation (the internal plastic “spline” or cross-web) and thicken the conductors from 24 AWG to 23 AWG (a lower gauge number means a larger diameter).

This is where physics directly impacts infrastructure TCO. A Cat6A cable has a significantly larger outer diameter and a larger minimum bend radius than Cat5e. In enterprise data centers, this directly reduced how many cables a conduit could hold within its permitted fill ratio. Upgrading Layer 1 suddenly required replacing the physical containment systems (cable trays and conduits) because the thicker cables literally would not fit.
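The effect on conduit fill is easy to estimate. The sketch below is a rough calculation under stated assumptions: the 40% fill limit is a commonly cited figure for three or more cables, and the conduit and cable diameters are illustrative values, not measurements from this deployment or from any datasheet.

```python
import math

MAX_FILL_RATIO = 0.40  # assumed limit for three or more cables in one conduit


def max_cables(conduit_inner_diameter_mm, cable_outer_diameter_mm,
               fill_ratio=MAX_FILL_RATIO):
    """Estimate how many cables fit without exceeding the fill ratio."""
    conduit_area = math.pi * (conduit_inner_diameter_mm / 2) ** 2
    cable_area = math.pi * (cable_outer_diameter_mm / 2) ** 2
    return math.floor(fill_ratio * conduit_area / cable_area)


CONDUIT_ID_MM = 50.0  # hypothetical conduit inner diameter
CAT5E_OD_MM = 5.2     # illustrative Cat5e outer diameter
CAT6A_OD_MM = 7.5     # illustrative Cat6A outer diameter

print("Cat5e runs:", max_cables(CONDUIT_ID_MM, CAT5E_OD_MM))
print("Cat6A runs:", max_cables(CONDUIT_ID_MM, CAT6A_OD_MM))
```

With these assumed figures the same conduit carries fewer than half as many Cat6A runs, because capacity scales with the square of the cable diameter.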

The T568A/B Paradox: Market Gravity vs. Regulatory Intent

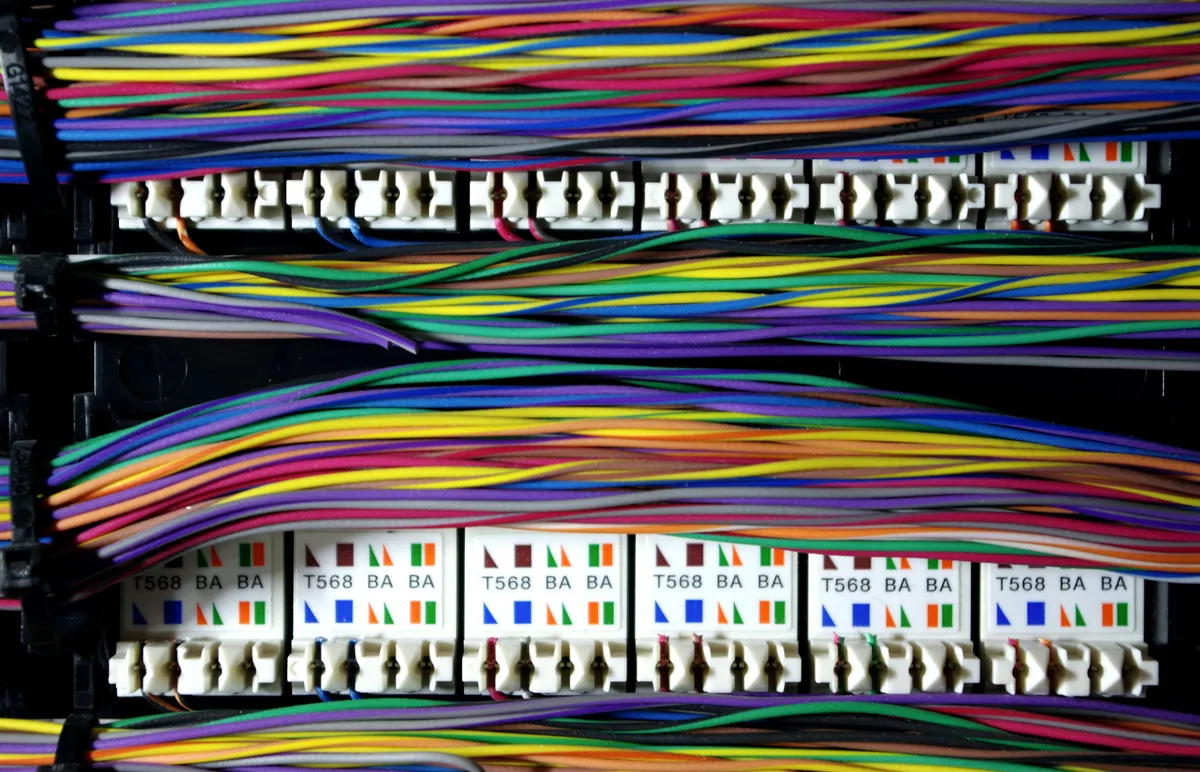

With UTP established, the industry adopted the 8-Position 8-Contact (8P8C) modular connector, borrowing heavily from existing telecommunications form factors. However, standardizing the physical pinout of these eight wires resulted in a schism that persists to this day: TIA/EIA-568-A versus TIA/EIA-568-B.

From a signal integrity perspective, there is zero electrical difference. The difference lies entirely in the assignment of Pairs 2 and 3 to specific pins.

+-----+--------------------+--------------------+
| Pin | T568A Standard     | T568B Standard     |
+-----+--------------------+--------------------+
| 1   | White/Green (TX+)  | White/Orange (TX+) |
| 2   | Green (TX-)        | Orange (TX-)       |
| 3   | White/Orange (RX+) | White/Green (RX+)  |
| 4   | Blue               | Blue               |
| 5   | White/Blue         | White/Blue         |
| 6   | Orange (RX-)       | Green (RX-)        |
| 7   | White/Brown        | White/Brown        |
| 8   | Brown              | Brown              |
+-----+--------------------+--------------------+

*TX/RX notation assumes an MDI (PC/Router) interface. The signal role belongs to the pin, not the wire color, so under either standard an MDI port transmits on Pins 1/2 and receives on Pins 3/6.

Why do two electrically equivalent standards exist? The answer is a case study in Legacy Inertia.

T568A was the preferred standard of the US Federal Government, designed to provide backward compatibility with older 1-pair and 2-pair USOC (Universal Service Ordering Code) voice systems.

Conversely, T568B mirrored the older AT&T 258A color code. Because AT&T had already deployed massive amounts of 258A infrastructure across corporate America, T568B possessed overwhelming market gravity. It was cheaper for enterprise organizations to adopt the standard that matched their existing PBX wiring than to conform to the federal recommendation.

Today, T568B remains the de facto industry standard not because it is technically superior, but because industry momentum consistently outpaces regulatory preference.

The MDI/MDI-X Mandate: The Era of the Crossover Cable

The transition to 10BASE-T and 100BASE-TX (Fast Ethernet) introduced a strict directional logic to the physical layer. The hardware was categorized into two port types:

- MDI (Medium Dependent Interface): End-user devices (PCs, servers, routers). These devices transmit (TX) on Pins 1/2 and receive (RX) on Pins 3/6.

- MDI-X (MDI Crossover): Infrastructure devices (switches, hubs). These devices transmit on Pins 3/6 and receive on Pins 1/2.

If you connect an MDI device to an MDI-X device using a “straight-through” cable (e.g., T568B on both ends), the TX pairs align perfectly with the RX pairs.

However, a fundamental architectural limitation arises when connecting “like” devices—MDI to MDI (PC to PC) or MDI-X to MDI-X (Switch to Switch).

[MDI Device - PC]                        [MDI Device - PC]
TX (Pins 1/2) -------------------------> TX (Pins 1/2) [COLLISION]
RX (Pins 3/6) <------------------------- RX (Pins 3/6) [COLLISION]

The Manufacturer’s Logic

To solve this, the industry introduced the Crossover Cable—a cable terminated with T568A on one end and T568B on the other. This physically crossed the TX and RX pairs within the cable itself.

[MDI Device - PC]      (Crossover)       [MDI Device - PC]
TX (Pins 1/2) ---------\ /-------------> RX (Pins 3/6) [LINK ESTABLISHED]
                        X
RX (Pins 3/6) <--------/ \-------------- TX (Pins 1/2) [LINK ESTABLISHED]

From a hardware manufacturing perspective in the 1990s, this was a highly rational decision. Silicon was expensive; copper was cheap. By forcing the physical cable to handle the TX/RX inversion, manufacturers avoided the cost of implementing complex detection and switching logic within the Network Interface Card (NIC) or switch PHY (Physical Layer) chips.
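The pin swap performed by a crossover cable falls directly out of the two color codes. The following sketch derives it by matching wire colors between the two ends; the color lists follow the T568A and T568B pinouts, while the helper and variable names are hypothetical.

```python
# Wire color on pins 1..8 for each termination standard.
T568A = ["white/green", "green", "white/orange", "blue",
         "white/blue", "orange", "white/brown", "brown"]
T568B = ["white/orange", "orange", "white/green", "blue",
         "white/blue", "green", "white/brown", "brown"]

MDI_TX_PINS = (1, 2)
MDI_RX_PINS = (3, 6)


def pin_map(near_end, far_end):
    """Map each near-end pin (1-8) to the far-end pin holding the same wire."""
    return {near_end.index(color) + 1: far_end.index(color) + 1
            for color in near_end}


straight = pin_map(T568B, T568B)   # same standard on both ends
crossover = pin_map(T568A, T568B)  # one end of each standard

# Pins whose wire color differs between the two standards.
differing = [pin for pin in range(1, 9) if T568A[pin - 1] != T568B[pin - 1]]

print("straight :", straight)
print("crossover:", crossover)
print("differing pins:", differing)
```

Only pins 1, 2, 3 and 6 change, which is why mixing the two standards on one cable lands each end's transmit pair on the other end's receive pair and leaves the blue and brown pairs untouched.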

The negative outcome was entirely operational. It introduced a massive TCO burden on field technicians and data center operators. Identifying a crossover cable required visual inspection of the terminating pins. A misidentified cable in a high-density switch stack led to immediate link failure, increasing Mean Time to Resolution (MTTR) during outages. The industry traded hardware efficiency for operational friction.

Silicon Deprecates Copper: Auto MDI-X

The requirement for crossover cables was ultimately deprecated not by a change in cable standards, but by the relentless advancement of PHY silicon.

Developed by HP engineers and subsequently integrated into the IEEE 802.3ab (1000BASE-T Gigabit Ethernet) standard in 1999, Auto MDI-X eliminated the need for physical crossover wiring.

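An Auto MDI-X capable interface listens for the link pulses of the connected device. If none arrive on its current receive pair, which is exactly what a TX-to-TX pairing produces, the PHY chip electronically swaps its TX and RX pairs internally. It waits a pseudo-random interval between attempts so that two Auto MDI-X ports facing each other do not swap in lockstep, and the link typically resolves in a fraction of a second. The silicon dynamically adapts to the physical medium, rendering the internal wiring of the cable irrelevant for basic connectivity.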
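The resolution logic can be approximated with a small simulation. This is a simplified sketch, not the state machine from the standard: Python's random generator stands in for the pseudo-random sequence a real PHY uses, time is reduced to abstract slots, and all names are hypothetical. A link is treated as up when the path contains an odd number of crossovers, counting each port in MDI-X mode and the cable itself.

```python
import random


def link_up(port_a, port_b, cable_crossed):
    """A link forms when the path contains an odd number of crossovers."""
    crossings = (port_a == "MDI-X") + (port_b == "MDI-X") + int(cable_crossed)
    return crossings % 2 == 1


def flip(mode):
    """Swap a port between MDI and MDI-X pin assignment."""
    return "MDI" if mode == "MDI-X" else "MDI-X"


def resolve(port_a, port_b, cable_crossed, rng,
            auto_a=True, auto_b=True, max_slots=50):
    """Return (slots_elapsed, port_a, port_b) once the link comes up."""
    for slot in range(max_slots):
        if link_up(port_a, port_b, cable_crossed):
            return slot, port_a, port_b
        # No link pulses seen: each auto-capable port randomly decides to swap.
        if auto_a and rng.random() < 0.5:
            port_a = flip(port_a)
        if auto_b and rng.random() < 0.5:
            port_b = flip(port_b)
    raise RuntimeError("link did not resolve")


rng = random.Random(42)
# Two PCs (both MDI) joined by a straight-through cable.
slots, a, b = resolve("MDI", "MDI", cable_crossed=False, rng=rng)
print(f"link up after {slots} slot(s): {a} <-> {b}")
```

The random choice matters: if both ports swapped deterministically on every slot, they would stay mismatched forever. Passing `auto_b=False` models a legacy fixed port, in which case only the Auto MDI-X side ever changes mode.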

(Note: 1000BASE-T Gigabit Ethernet actually utilizes all four pairs for simultaneous bidirectional transmission using PAM-5 encoding and DSP echo cancellation, fundamentally changing the TX/RX paradigm anyway. However, Auto MDI-X ensures backward compatibility when negotiating down to 10/100 speeds.)

Abstraction as a Service

The lifecycle of the crossover cable provides a critical framework for evaluating modern infrastructure: Hardware variance must be abstracted by logic.

The era of the crossover cable failed because it required human operators to manage logic at the lowest possible layer (physical wiring). The TCO of managing this physical variance vastly exceeded the cost of embedding the detection logic directly into the silicon.

As we evaluate contemporary architectures—whether it is routing complex BGP policies, managing Kubernetes ingress, or deploying zero-trust network access—the principle remains identical. If your deployment requires manual “splicing” of custom configurations for every new endpoint, you are generating operational debt.

Resilient systems standardize the physical (or foundational) layer and handle the variance in the logic layer. The goal of a Systems Architect is not to stock both straight-through and crossover cables; the goal is to mandate Auto MDI-X hardware, allowing the system to silently self-correct beneath the operator’s line of sight.

References & Architecture Context

- ANSI/TIA-568.2-D Standard: The defining regulatory architecture for balanced twisted-pair telecommunications cabling, standardizing the T568A and T568B pinout paradigms.

- IEEE 802.3ab-1999 (1000BASE-T): The physical layer specification for Gigabit Ethernet over four pairs of Category 5 UTP, formally introducing the necessity of Digital Signal Processing (DSP) and laying the groundwork for Auto MDI-X.

- US Patent US6175865B1 (Auto MDI-X): Automatic detection and resolution of straight-through vs. cross-over cables. Authored by HP engineers Dan Dove and Bruce Melvin, this patent documents the mechanism that deprecated manual crossover logic.